What Is Artificial General Intelligence?

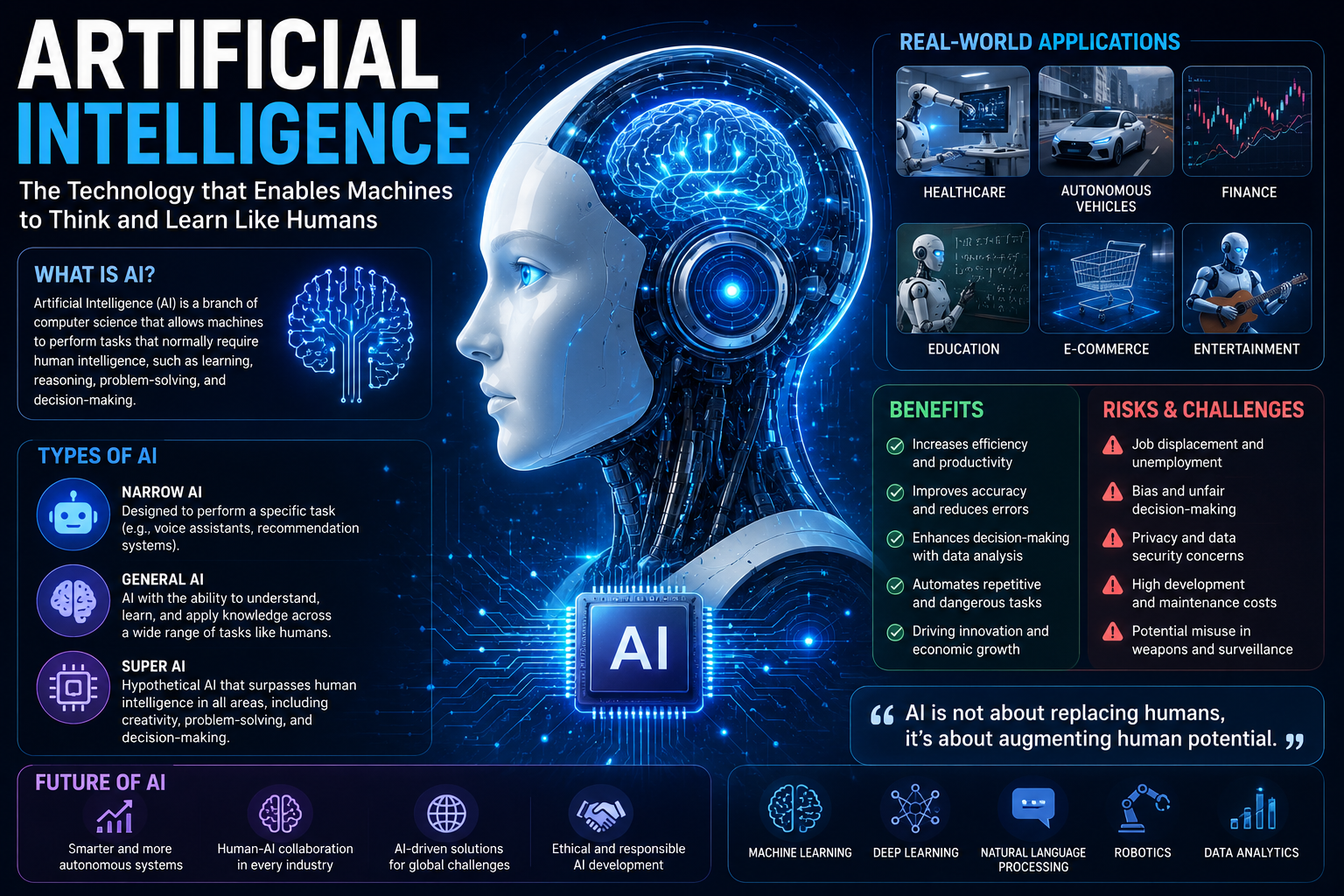

Artificial General Intelligence (AGI) refers to a type of AI that can understand, learn, and apply knowledge across a wide range of tasks, much like a human being. Unlike narrow AI systems, which are designed to perform specific tasks (such as recognizing images or translating text), an AGI system would be able to reason, plan, and adapt across domains without being explicitly programmed for each one.

In simple terms: narrow AI is a specialist. AGI is a generalist.

Why Does AGI Matter?

AGI represents one of the most transformative — and debated — milestones in the history of technology. If achieved:

It could accelerate scientific discovery by decades.

It could solve complex global problems (climate, disease, poverty).

It could fundamentally reshape economies, labor markets, and governance.

It would raise profound questions about safety, ethics, and human identity.

State of AGI in 2026

As of 2026, we do not yet have AGI. However, the gap between narrow AI and AGI has narrowed significantly. Large Language Models (LLMs) and multimodal AI systems have demonstrated surprising generalization: solving math Olympiad problems, writing working code, and matching expert-level performance on some medical-diagnosis benchmarks.

Where We Are Today

| Capability Domain | Current Achievement | Maturity Level |
|---|---|---|
| Large Language Models | Near-human writing, reasoning, coding | High |
| Multimodal AI | Image + text + audio understanding | High |
| Autonomous Agents | Multi-step task completion, tool use | Medium |
| Embodied Robotics | Physical world interaction | Low-Medium |
| World Models | Early progress toward true cause-and-effect reasoning | Early Stage |

Leading Research Organizations

| Organization | Notable Work and Focus |
|---|---|
| OpenAI | GPT-5, o-series reasoning models; AGI-focused mission |
| Anthropic | Claude model family; safety-first AGI development |
| Google DeepMind | Gemini Ultra; AlphaFold breakthroughs in science |
| Meta AI | Open-weight Llama models; academic collaboration |
| xAI | Grok models; real-time information integration |
| Mistral, Cohere, AI21 Labs | Specialized and enterprise-focused models |

How Current AI Approaches AGI

Large Language Models (LLMs)

LLMs are trained on vast amounts of text data using self-supervised learning: they learn to predict the next word (or token) in a sequence, which pushes them to internalize grammar, facts, reasoning patterns, and even some common sense. The largest models (GPT-4, Claude 3, Gemini Ultra) have shown emergent capabilities they were not explicitly trained for.
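The next-token objective can be sketched as an average cross-entropy: for each position, the model's probability for the true next token is scored by its negative logarithm, and training lowers that average. The sketch below assumes a toy, hypothetical bigram table (`BIGRAM_PROBS`) in place of a neural network; a real LLM computes these probabilities with a Transformer conditioned on the entire preceding context.

```python
import math

# Hypothetical toy "model": P(next token | previous token) as a bigram lookup table.
# A real LLM conditions on the whole preceding context, not just one token.
BIGRAM_PROBS = {
    "the": {"cat": 0.5, "dog": 0.3, "sat": 0.2},
    "cat": {"sat": 0.7, "the": 0.2, "dog": 0.1},
    "sat": {"the": 0.6, "cat": 0.2, "dog": 0.2},
}

def next_token_loss(tokens: list[str]) -> float:
    """Average negative log-likelihood of each token given the token before it."""
    nll = 0.0
    for prev, nxt in zip(tokens, tokens[1:]):
        p = BIGRAM_PROBS[prev].get(nxt, 1e-9)  # tiny floor avoids log(0) for unseen pairs
        nll -= math.log(p)
    return nll / (len(tokens) - 1)

print(round(next_token_loss(["the", "cat", "sat"]), 4))  # 0.5249
```

"Self-supervised" here means the labels are simply the next tokens of the text itself, so no human annotation is needed; training adjusts the model so this loss falls across billions of sequences.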

Reinforcement Learning from Human Feedback (RLHF)

RLHF is a training technique used to align AI models with human preferences. Human raters compare or rank AI outputs; those judgments train a reward model, and the AI model is then fine-tuned with reinforcement learning to produce responses the reward model scores as helpful, harmless, and honest. This approach dramatically improved the usability and safety of modern AI assistants.
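The reward-model step is commonly trained with a pairwise, Bradley-Terry style loss: given a response the rater preferred and one they rejected, the loss is small when the reward model scores the preferred response higher. This minimal sketch assumes the two reward scores are already plain numbers; in practice they come from a neural network, and the later reinforcement-learning stage is not shown.

```python
import math

def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Bradley-Terry style loss: -log(sigmoid(reward_chosen - reward_rejected)).

    Small when the reward model scores the human-preferred response higher,
    large when it prefers the rejected one.
    """
    margin = reward_chosen - reward_rejected
    # Numerically stable softplus(-margin), which equals -log(sigmoid(margin))
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

print(round(pairwise_preference_loss(2.0, 0.0), 3))  # 0.127: preferred answer scored higher
print(round(pairwise_preference_loss(0.0, 0.0), 3))  # 0.693: reward model is indifferent
print(round(pairwise_preference_loss(0.0, 2.0), 3))  # 2.127: preferred answer scored lower
```

In full RLHF, the trained reward model then supplies the reward signal that a reinforcement-learning algorithm (often PPO) uses to fine-tune the assistant itself.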

Reasoning and Chain-of-Thought

A major breakthrough in 2024-2026 was teaching AI systems to reason step-by-step before answering. Models like OpenAI o1/o3 and DeepSeek R1 spend “thinking time” working through problems — dramatically improving performance on complex math, science, and coding tasks.
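How o1/o3 and R1 learn to reason is trained into the models through reinforcement learning and cannot be reproduced in a few lines. A simpler, published technique that also trades extra inference-time compute for accuracy is self-consistency: sample several independent reasoning chains and take a majority vote over their final answers. This sketch assumes the final answers have already been extracted from sampled chains; the sample list is hypothetical illustration data, not real model output.

```python
from collections import Counter

def self_consistency(final_answers: list[str]) -> tuple[str, float]:
    """Return the most common final answer and the fraction of chains that agree with it."""
    if not final_answers:
        raise ValueError("need at least one sampled answer")
    answer, votes = Counter(final_answers).most_common(1)[0]
    return answer, votes / len(final_answers)

# Hypothetical final answers extracted from five sampled reasoning chains
samples = ["42", "42", "41", "42", "17"]
print(self_consistency(samples))  # ('42', 0.6)
```

The agreement fraction doubles as a rough confidence signal: when sampled chains disagree widely, the voted answer deserves less trust.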

Autonomous Agents

AI agents combine LLMs with the ability to use tools (web search, code execution, file management) and take multi-step actions over time. Systems like Claude Computer Use, OpenAI Operator, and various open-source frameworks can now autonomously browse the web, write code, fill forms, and manage workflows.
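At the core of most agent frameworks is a loop: the model either requests a tool call or returns a final answer, and each tool result is fed back as an observation until the task ends or a step limit is reached. This is a minimal sketch, not the API of Claude Computer Use, Operator, or any specific framework; `scripted_model` and the two tools are hypothetical stand-ins so the loop runs without a real LLM.

```python
from typing import Callable

# Hypothetical tools; a real agent might wrap web search, code execution, or file access.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split(","))),
    "upper": lambda arg: arg.upper(),
}

def scripted_model(history: list[str]) -> dict:
    """Hypothetical stand-in for an LLM: picks the next action from the transcript so far."""
    if not any(line.startswith("observation:") for line in history):
        # First step: request a tool call
        return {"type": "tool", "name": "add", "input": "2,3,4"}
    # A tool result is available: turn it into a final answer
    last_obs = history[-1].removeprefix("observation: ")
    return {"type": "final", "answer": f"The sum is {last_obs}"}

def run_agent(task: str,
              model: Callable[[list[str]], dict] = scripted_model,
              max_steps: int = 5) -> str:
    """Act-observe loop: call the model, run any requested tool, feed the result back."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        action = model(history)
        if action["type"] == "final":
            return action["answer"]
        tool = TOOLS.get(action["name"])
        result = tool(action["input"]) if tool else f"error: unknown tool {action['name']}"
        history.append(f"observation: {result}")
    return "stopped: step limit reached"

print(run_agent("Add 2, 3 and 4"))  # The sum is 9
```

The `max_steps` cap is the simplest guard against an agent looping indefinitely; real systems may add further checks, such as asking a human before consequential actions.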

Defining AGI: The Ongoing Debate

There is no universally agreed definition of AGI. Researchers, philosophers, and technologists disagree on what it would mean for an AI to be “general.” Here are the most prominent definitions:

AGI Timelines: Predictions & Forecasts

Predicting AGI timelines is notoriously difficult. Below are estimates from prominent researchers and organizations as of 2026:

| Predictor | Timeline Estimate |
|---|---|
| Sam Altman (OpenAI) | Within this decade (by around 2030) |
| Demis Hassabis (Google DeepMind) | Possibly within a decade |
| Elon Musk (xAI) | 2025-2027 (highly optimistic estimate) |
| Geoffrey Hinton | 5-20 years |
| Yann LeCun (Meta AI) | Decades away; requires a paradigm shift |
| Metaculus (forecasting platform) | Median estimate: 2032-2040 |
| AI Impacts 2022 survey of AI researchers | 50% chance by 2059 |

The wide variance in estimates reflects genuine scientific uncertainty — AGI arrival depends on yet-unknown breakthroughs in reasoning, memory, embodiment, and alignment.

Risks, Safety, and Ethics

The development of AGI is one of the highest-stakes endeavors in human history. A wide range of risks — from short-term harms to existential threats — must be carefully managed.

Short-Term Risks (Already Occurring)

Misinformation and deepfakes generated at scale

Job displacement in knowledge-work sectors

Algorithmic bias and discrimination

Cybersecurity vulnerabilities from AI-powered attacks

Concentration of AI power in a few corporations

Medium-Term Risks (Emerging)

Autonomous AI systems making consequential decisions without oversight

Loss of human control over critical infrastructure

AI-enabled surveillance and authoritarian control

Economic inequality between AI-enabled and non-AI nations

Long-Term / Existential Risks

Misaligned AGI pursuing goals harmful to humanity

Recursive self-improvement leading to uncontrollable superintelligence

Power seizure by AI or by humans using AI as a tool

AI Safety Approaches

| Approach | Description |
|---|---|
| Constitutional AI (Anthropic) | Training AI systems with explicit ethical principles |
| Interpretability Research | Understanding what happens inside neural networks |
| Red Teaming | Stress-testing AI systems for dangerous outputs |
| Scalable Oversight | Ensuring humans can supervise increasingly capable AI |
| Regulatory Frameworks | Government policies to govern AI development and deployment |

Economic and Societal Impact

Labor Market Transformation

Current AI, and AGI if it arrives, could significantly transform labor markets. Some estimates suggest that 30-60% of tasks in knowledge-work professions (law, medicine, finance, programming) could be automated by 2030. However, AI also creates new roles and industries; historically, technology has both displaced and created jobs.

GDP and Productivity

Goldman Sachs (2023) estimated that generative AI could raise global GDP by about 7%, or nearly $7 trillion, over a decade. McKinsey Global Institute (2023) projected that generative AI could add $2.6 trillion to $4.4 trillion annually to the global economy through productivity improvements across sectors.

Industries Most Affected

| Industry | AI Application | Disruption Level |
|---|---|---|
| Healthcare | Diagnosis, drug discovery, personalized medicine | Very High |
| Legal | Contract review, research, document analysis | Very High |
| Finance | Trading, risk assessment, fraud detection | High |
| Education | Personalized tutoring, curriculum design | High |
| Creative Industries | Writing, design, music generation | Medium-High |
| Manufacturing | Quality control, supply chain, robotics | Medium |
| Transportation | Autonomous vehicles, logistics optimization | Medium |

Governance and Regulation

Global Regulatory Landscape (2026)

| Jurisdiction | Approach |
|---|---|
| European Union (EU AI Act) | Comprehensive risk-based AI regulation; entered into force in 2024, with obligations phasing in from 2025 through 2027 |
| United States | Executive Order on AI (2023), revoked in January 2025; emerging bipartisan legislative efforts |
| United Kingdom | Pro-innovation approach; AI Safety Institute established (2023) |
| China | Generative AI regulations; state-guided development model |
| International | G7 Hiroshima AI Process; UN Advisory Body on AI |

Key Governance Principles

| Principle | Meaning |
|---|---|
| Transparency | AI systems should be explainable and auditable |
| Accountability | Clear lines of responsibility for AI decisions |
| Safety | Mandatory testing before deployment of frontier models |
| Fairness | Prohibition on discriminatory AI applications |
| Human Oversight | Humans must retain meaningful control over high-risk AI |

The Road to AGI: What Comes Next?

| Research Frontier | Goal |
|---|---|
| World Models | AI that truly understands cause-and-effect in the physical world |
| Long-Term Memory | Persistent, structured memory across conversations and tasks |
| Causal Reasoning | Moving beyond statistical correlation to true understanding |
| Embodiment | Integration with physical systems (robots) to learn from the world |
| Meta-Learning | AI that learns how to learn efficiently with minimal data |
| Robust Alignment | Reliably encoding human values into increasingly capable systems |

The Post-AGI World: Scenarios

| Scenario | Description | Assessment |
|---|---|---|
| Cooperative AGI | AGI developed safely; used to solve global challenges | Best case |
| Gradual Transition | AGI emerges slowly; society adapts over decades | Hopeful |
| Uneven Distribution | AGI benefits flow to a few; inequality rises sharply | Concerning |
| Loss of Control | AGI misalignment causes large-scale harm | Dangerous |
| Superintelligence | Recursive self-improvement leads to uncontrollable ASI | Existential risk |

The Critical Window

Many AI safety researchers argue that the decisions made in the next 3-10 years — about how to develop, deploy, and govern AI — will be among the most consequential in human history. The choices made now will shape the trajectory of AGI and its impact on humanity.

Glossary of Key Terms

| Term | Definition |
|---|---|
| AGI | Artificial General Intelligence: AI that can perform any intellectual task a human can |
| ASI | Artificial Superintelligence: AI that surpasses human intelligence in all domains |
| LLM | Large Language Model: AI trained on massive text data (GPT-4, Claude, Gemini) |
| RLHF | Reinforcement Learning from Human Feedback: training AI to align with human values |
| Alignment | The challenge of ensuring AI systems pursue goals beneficial to humanity |
| Emergent Behavior | Unexpected capabilities that arise in large AI models without being explicitly trained for |
| AI Agent | An AI system that can take multi-step actions to complete goals autonomously |
| Interpretability | Research into understanding the internal workings of neural networks |
| Hallucination | When an AI generates confidently stated but factually incorrect information |
| Transformer | The neural network architecture underlying most modern large AI models |
| Fine-tuning | Additional training of a base model for specific tasks or domains |
| Multimodal AI | AI that can process multiple types of input: text, image, audio, video |

Conclusion

Artificial General Intelligence remains the defining technological challenge of our era. In 2026, we stand at a pivotal moment — closer to AGI than ever before, yet still navigating fundamental unsolved problems in reasoning, alignment, and safety.

The path to AGI is not just a technical journey. It is a societal, ethical, and political one. The question is not merely whether we can build AGI — but whether we can build it wisely, equitably, and safely. That responsibility belongs to researchers, governments, companies, and citizens alike.

Final Thought

AGI is not inevitable — but it is plausible within our lifetime. Whether it becomes humanity’s greatest achievement or its greatest risk depends on the choices we make today. Stay informed. Stay engaged. The future of intelligence is being written now.